Securing Distributed AI Workloads with Equinix and Palo Alto Networks

1.0 Secure Enterprise Distributed AI Hub

Artificial intelligence has rapidly evolved from an emerging capability to a core component of modern digital ecosystems. As organizations deploy generative AI, machine learning models, and autonomous decision-making AI agents, they face an expanding set of security challenges that differ fundamentally from traditional cybersecurity risks. AI agents are highly dynamic, data-driven, and nondeterministic, which introduces new attack surfaces and vulnerabilities that conventional security frameworks were not designed to address.

At the same time, AI has become a powerful tool for defenders, enhancing threat detection, automating incident response, and improving cloud security posture. However, malicious actors are also exploiting AI to scale cyberattacks through techniques like deepfakes, automated phishing, prompt injection, goal hijacking, and model poisoning. This dual-use nature of AI underscores the urgent need for organizations to adopt integrated approaches that focus on both AI for security and security for AI.

AI adoption is accelerating across industries, often outpacing governance and oversight. Many enterprises are unknowingly exposing themselves to significant risk through shadow AI, unmonitored AI endpoints, unvetted tools, and models integrated into workflows without proper security controls. These vulnerabilities can lead to data leakage, compliance violations, and misuse of sensitive data. As real-world incidents in which chatbots made false commitments or exposed regulated data have shown, improper AI configuration can have financial, reputational, and legal consequences.

Securing AI requires a multilayered strategy that addresses the entire AI lifecycle from responsible data sourcing and model training to deployment, monitoring, and incident response. Leading guidance recommends:

- Understanding model behavior and verifying outputs

- Implementing strong access controls and auditing mechanisms

- Protecting training data and preventing model tampering

- Integrating AI with cloud security best practices

- Ensuring continuous human oversight for high-impact AI decisions

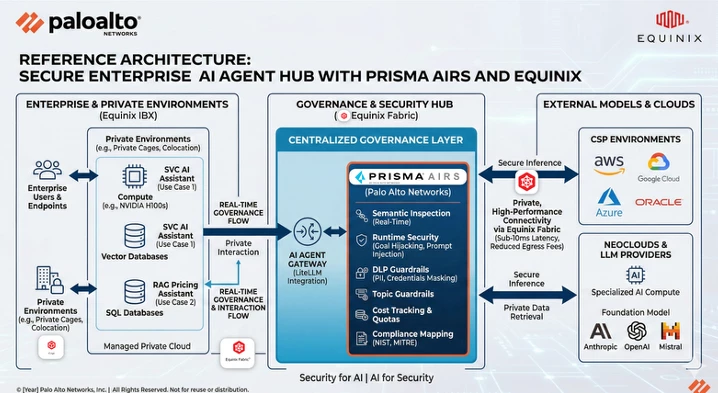

At Equinix, we have partnered with Palo Alto Networks to jointly develop the “Secure Distributed AI Hub” solution, built on Palo Alto Networks Prisma AI Runtime Security (Prisma AIRS). This white paper is intended as a reference architecture for securing customer AI workloads using the Equinix platform of products and services.

2.0 Current AI Security Challenges

With their rich data interactions and complex, often unpredictable model behaviors, GenAI applications are quickly becoming prime targets for exploitation. The security challenges associated with GenAI include the following:

- Application protection: Attackers use a range of methods to gain access to and control over application services. Implementing security measures such as service segmentation, advanced threat protection, URL filtering, and database query restrictions is critical to defend against unauthorized access and data breaches in AI applications.

- Model protection: Securing LLMs is a significant challenge. It involves blocking malicious code embedded in models, preventing deserialization attacks, and thwarting malicious code generation to safeguard the integrity and functionality of the models.

- Data protection: GenAI models require large amounts of data to train, which may include sensitive information. GenAI data protection involves safeguarding the data used for training, fine-tuning, and running generative models to ensure the privacy, integrity, and security of the information. It also includes inspecting application service communication in and out of the data layer to prevent exploits such as database corruption or database poisoning, unmoderated content, and exfiltration of sensitive or classified data.

3.0 Understanding the Unique AI Attack Surface

Unlike traditional cybersecurity focused on networks and data, AI systems face unique vulnerabilities that exploit how these systems learn and operate. To effectively protect AI systems, we need to understand the specific ways attackers target them.

3.1 Data Poisoning Attacks

Data poisoning occurs when attackers secretly insert harmful inputs into training data, compromising AI systems before they are even deployed. Think of a company developing a content moderation AI that unknowingly trains on data containing subtle malicious patterns, which later cause the system to let harmful content through. This resembles contaminating ingredients before a recipe is made rather than tampering with the finished dish.
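One partial mitigation is to verify training files against a trusted manifest of hashes before each training run. The sketch below illustrates that idea only; the function names and the manifest layout are assumptions made for this example, and the check detects files changed after approval, not poison that was already present when the data was approved.

```python
import hashlib
import tempfile
from pathlib import Path


def sha256_of(path: Path) -> str:
    """Return the hex SHA-256 digest of a file, read in chunks."""
    digest = hashlib.sha256()
    with path.open("rb") as handle:
        for chunk in iter(lambda: handle.read(65536), b""):
            digest.update(chunk)
    return digest.hexdigest()


def find_tampered(manifest: dict[str, str], data_dir: Path) -> list[str]:
    """List files that are missing or whose hash differs from the manifest."""
    tampered = []
    for name, expected in sorted(manifest.items()):
        candidate = data_dir / name
        if not candidate.is_file() or sha256_of(candidate) != expected:
            tampered.append(name)
    return tampered


def demo() -> list[str]:
    """Approve two files, then modify one and report what changed."""
    with tempfile.TemporaryDirectory() as tmp:
        data_dir = Path(tmp)
        (data_dir / "reviews.csv").write_text("text,label\ngreat,ok\n")
        (data_dir / "posts.csv").write_text("text,label\nhello,ok\n")
        # The manifest is the hypothetical "approved" snapshot of the dataset.
        manifest = {p.name: sha256_of(p) for p in data_dir.iterdir()}
        # Simulate an attacker appending a mislabeled row after approval.
        with (data_dir / "posts.csv").open("a") as handle:
            handle.write("harmful text,ok\n")
        return find_tampered(manifest, data_dir)
```

In practice the manifest would need to be stored and signed separately from the data it describes, so that an attacker who can alter the dataset cannot also alter the expected hashes.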

3.2 Prompt Injection Attacks

AI systems are vulnerable to carefully worded inputs that override safety measures, known as prompt injection attacks. When your AI assistant visits a website containing hidden instructions, those commands might redirect the AI's behavior without your knowledge: modifying shopping orders, revealing sensitive information, or worse.
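To make the hidden-instruction scenario concrete, the following sketch extracts text that a human visitor would not see (HTML comments and elements styled `display:none`) and flags it when it contains instruction-like phrases. It is a deliberately naive illustration: the phrase list, class name, and function name are assumptions for this example, and fixed patterns like these are easy to evade, which is why semantic inspection is needed in production.

```python
import re
from html.parser import HTMLParser

# Illustrative phrases only; real attacks vary widely and evade fixed lists.
SUSPICIOUS = re.compile(
    r"ignore (all |any )?(previous|prior) instructions|system prompt|you must now",
    re.IGNORECASE,
)
# Tags that never have a closing tag, so they must not change nesting depth.
VOID_TAGS = {"br", "hr", "img", "input", "link", "meta"}


class HiddenTextCollector(HTMLParser):
    """Collect text a human reader would not see: comments and hidden elements."""

    def __init__(self) -> None:
        super().__init__()
        self.hidden_depth = 0
        self.hidden_text: list[str] = []

    def handle_starttag(self, tag, attrs):
        if tag in VOID_TAGS:
            return
        style = (dict(attrs).get("style") or "").replace(" ", "").lower()
        if self.hidden_depth or "display:none" in style:
            self.hidden_depth += 1

    def handle_endtag(self, tag):
        if tag not in VOID_TAGS and self.hidden_depth:
            self.hidden_depth -= 1

    def handle_data(self, data):
        if self.hidden_depth and data.strip():
            self.hidden_text.append(data.strip())

    def handle_comment(self, data):
        if data.strip():
            self.hidden_text.append(data.strip())


def flag_hidden_instructions(html: str) -> list[str]:
    """Return hidden text fragments that look like instructions to an AI agent."""
    collector = HiddenTextCollector()
    collector.feed(html)
    collector.close()
    return [text for text in collector.hidden_text if SUSPICIOUS.search(text)]
```

A caller could run `flag_hidden_instructions(page_html)` on retrieved content before passing it to a model and quarantine any page that returns a non-empty list.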

3.3 Model Deserialization Attacks

When AI models are packaged for storage or sharing, attackers can embed malicious code within them. When loaded by your application, this hidden code activates — like opening what appears to be a legitimate document that secretly installs malware on your computer.
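Python's `pickle` format, which is widely used to package models, is a typical carrier for this attack because unpickling can import and call arbitrary objects. As a minimal sketch, the standard-library `pickletools` module can list the opcodes in a pickle stream without executing it. The `RISKY_OPCODES` set and the function name are choices made for this example, not an authoritative scanner.

```python
import pickle
import pickletools

# Opcodes that can import or call objects during unpickling.
RISKY_OPCODES = {
    "GLOBAL", "STACK_GLOBAL", "REDUCE", "INST", "OBJ",
    "NEWOBJ", "NEWOBJ_EX", "BUILD",
}


def risky_opcodes(payload: bytes) -> list[str]:
    """Return risky opcode names found in a pickle stream, without loading it."""
    found = []
    for opcode, _arg, _pos in pickletools.genops(payload):
        if opcode.name in RISKY_OPCODES and opcode.name not in found:
            found.append(opcode.name)
    return found


class Booby:
    """Stand-in for a tampered model object: unpickling it would call print()."""

    def __reduce__(self):
        return (print, ("this would run at load time",))


# Serializing is safe; only loading would trigger the embedded call.
plain_weights = pickle.dumps({"weights": [0.1, 0.2]})
tampered_model = pickle.dumps(Booby())
```

Here `risky_opcodes(plain_weights)` is empty, while the tampered payload reports a `REDUCE` opcode. Many legitimate model files also use these opcodes, so a real control would pair a scan like this with an allowlist of permitted imports or with a weights-only storage format.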

3.4 Autonomous AI System Risks

Autonomous, agentic AI systems present additional risks due to their ability to make independent decisions and take actions. Imagine your AI home assistant interacting with a fraudulent website that embeds hidden commands in its responses. These instructions override the AI's safety protocols, causing it to secretly order unauthorized items while performing routine tasks, all without your knowledge or detection until significant damage has been done.

3.5 OWASP Top 5 for Agentic Applications (2026)

| Risk | Description |
|---|---|
| ASI01-Agent Goal Hijack | Attackers manipulate an agent’s objective, altering its reasoning or task selection so that the agent performs unintended or harmful actions. |
| ASI02-Tool Misuse & Exploitation | Agents can be tricked into invoking tools, APIs, or system functions in dangerous ways, resulting in unauthorized data access, file deletion, or harmful downstream actions. |
| ASI03-Identity & Privilege Abuse | Agents often inherit human or system credentials. Weak boundaries or leaked tokens enable privilege escalation and unauthorized system access. |
| ASI04-Agentic Supply Chain Vulnerabilities | Agents rely on plugins, extensions, datasets, APIs, and other agents. Any compromised upstream component can cascade into the entire agent ecosystem. |
| ASI06-Memory & Context Poisoning | Agents store persistent memories or working context. Attackers can inject harmful data that influences decisions, leading to long-term behavioral corruption. |
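A common way to limit the tool misuse and privilege abuse risks listed above is to default-deny tool calls and to pause high-impact actions until a person approves them. The sketch below shows that pattern; `ToolPolicy`, `authorize`, and the tool names are hypothetical and not part of any specific agent framework.

```python
from dataclasses import dataclass, field


@dataclass
class ToolPolicy:
    """Hypothetical per-agent policy: which tools may run, and which need a human."""
    allowed: set[str]
    needs_approval: set[str] = field(default_factory=set)


def authorize(policy: ToolPolicy, tool: str, approved_by_human: bool = False) -> str:
    """Return 'allow', 'hold', or 'deny' for a requested tool call."""
    if tool not in policy.allowed:
        return "deny"  # default-deny anything not explicitly listed
    if tool in policy.needs_approval and not approved_by_human:
        return "hold"  # pause until a person confirms the action
    return "allow"


# Example policy: read-only search runs freely, reservations need sign-off.
example_policy = ToolPolicy(
    allowed={"search_inventory", "reserve_resource"},
    needs_approval={"reserve_resource"},
)
```

With this policy, a hijacked agent that requests an unlisted tool such as `delete_file` is denied outright, and a reservation request is held until a human confirms it.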

5.0 Essential Capabilities

As technology rapidly changes, organizations need a complete connectivity, security, and visibility solution for agents, models, GenAI applications, and public cloud systems.

To protect GenAI applications effectively in the public cloud, the solution must include these key security capabilities:

- Protect GenAI applications from threats, data loss, and data exfiltration.

- Identify running virtual machines and container application resources.

- Provide visibility and control of outbound, east-west, and inbound traffic, regardless of the workload type, application, port, or protocol used.

- Centrally manage security policies across a multi-cloud environment.

- Centralize logging for visibility and analysis.

Palo Alto Networks’ blog, “Building Secure AI by Design: A Defense-in-Depth Approach,” explains how organizations can apply secure‑by‑design principles and layered controls across the AI lifecycle to mitigate emerging GenAI threats (Palo Alto Networks Blog).

6.0 Equinix Enterprise AI Platform Architecture

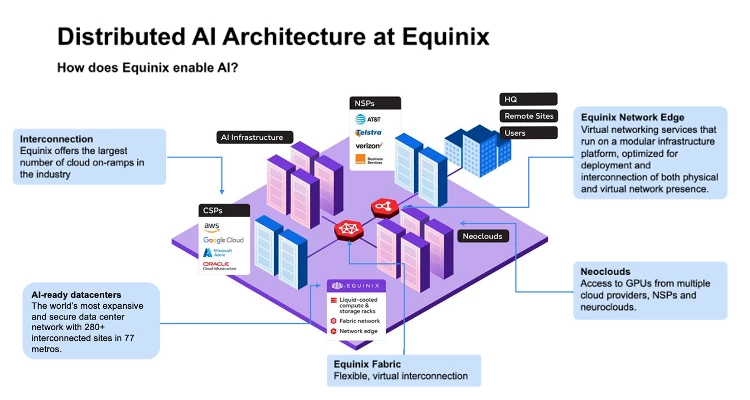

One of the major challenges in enterprise AI applications is processing and aggregating data collected from multiple geographies around the world. AI development requires access to large GPU clusters and to massive datasets that are distributed across diverse systems and hard to move (data gravity), in order to support model training and neural network development.

GPU scarcity on traditional hyperscalers has customers looking to Neo Cloud providers for large GPU capacity, with InfiniBand or RoCEv2 networks for multinode training, high-performance storage, and low-latency fabrics.

Customers consume pre-trained models from cloud service providers (CSPs) via API and need those models fine-tuned on their enterprise-specific, domain-relevant datasets for their AI applications. Handling this sensitive data often involves stringent security and privacy regulations.

Enterprise AI application development requires interconnection among enterprise data, CSPs, and Neo Clouds, along with low network latency to CSPs for optimal user performance.

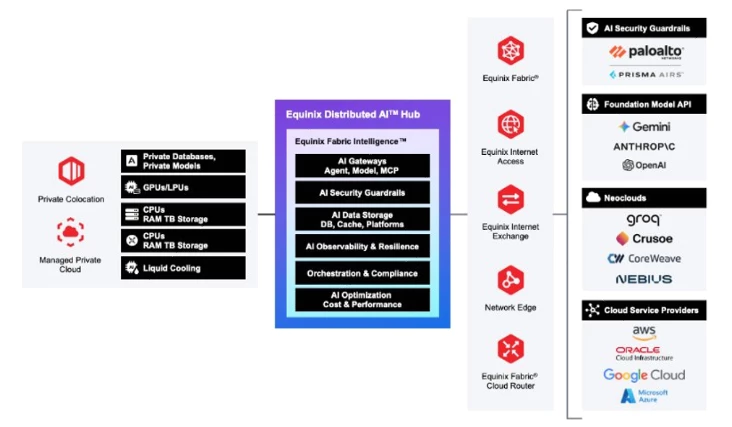

For enterprises that want to realize greater business value and maximize the potential of AI-ready digital infrastructure, Equinix offers “Distributed AI solutions” that effectively harness the power of AI while allowing enterprises to maintain full control over their data, ensure compliance, and benefit from advanced connectivity and cooling.

We, at Equinix, accelerate customer AI innovation.

Equinix’s global footprint plays a critical role in supporting Distributed AI solutions with 270+ International Business Exchange™ (IBX®) data centers in 77 major metros around the world.

Equinix offers the most complete, interconnected and vendor-neutral AI ecosystem in the industry. With access to customers, partners and multi-cloud connectivity across clouds, platforms and services, organizations gain the freedom to use the best tools for every phase of AI. Customers benefit from access to our ecosystem of 10,000+ enterprises including 2,000+ networks and 3,000+ cloud and IT service providers, supporting efficient data transfer and collaboration.

They can place AI infrastructure close to their data sources with direct high-speed connectivity to multiple clouds. This extensive network enables easy interconnection, supporting inference at strategic locations at the digital edge and training where compute is abundant, while also tapping into cloud services as needed. This reduces latency and enhances performance for AI training and inference.

Additionally, Equinix’s data centers are powered by 96% renewable energy, supporting sustainable global digital infrastructure, and enabling customers to meet their climate action goals and targets too.

For customer data subject to the data sovereignty laws and regulations of its country of residence, Equinix Distributed AI gives customers the ultimate control, privacy, and security over their data. This technical reference addresses how to implement AI security for this solution with Palo Alto Networks Prisma AI Runtime Security (Prisma AIRS).

7.0 Design and Architecture: Distributed AI Agent Hub

This design pattern establishes AI Agent Hubs at strategic locations among Equinix's 270+ IBX data centers, creating a centralized governance layer between your enterprise environment and your AI services. Rather than each application integrating directly with multiple providers, all traffic flows through a secure, optimized hub that enforces policy, tracks cost, and manages connectivity.

7.1 Core Components

7.1.1 Optimized Interconnection: Equinix

Purpose: Provide secure, high-performance connectivity optimized for AI Workloads.

Key Capabilities:

Equinix IBX:

- 270+ data centers across 77 major metros globally for data sovereignty

- Colocation at the edge

- Physical security and reliability for mission-critical AI workloads

Equinix Fabric:

- Private, low-latency connectivity to 3,000+ service providers

- Software-defined, on-demand connectivity without physical cross connects

- Bandwidth scaling as AI usage grows

Performance Benefits:

- Sub-10ms latency to major AI and cloud providers in key metros

- Dedicated bandwidth eliminates public internet congestion

- 99.999%+ uptime for business-critical applications

- Geo-fenced routing paths for data residency requirements

- Eliminate public internet dependencies for critical AI workloads

Cost Benefits:

- Reduce cloud egress fees by 50%-70%

- Bandwidth scaling and software-defined connectivity to optimize spend and scale as needed

7.1.2 AI Security & Governance: Palo Alto Networks Prisma AI Runtime Security

Palo Alto Networks’ Prisma AIRS provides real-time protection for AI applications by inspecting every input prompt and output response flowing through your AI infrastructure.

Unlike traditional security tools that only see network traffic patterns, Prisma AIRS understands the semantic content and intent of AI conversations, detecting threats that would otherwise be invisible to perimeter defenses.

Prisma AIRS continuously monitors AI traffic to detect and block threats in real time:

- Prompt Injection & Jailbreak Prevention: Identifies attempts to manipulate AI models through crafted inputs designed to bypass safety controls, extract system prompts, or alter model behavior. Detection covers direct injection, indirect injection via retrieved content, and multi-turn manipulation techniques.

- Data Loss Prevention: Inspects prompts and responses to detect sensitive data including PII, credentials, API keys, trade secrets, and proprietary code. Configurable policies can block, mask, or alert based on data classification, user role, and destination. DLP profiles can be customized to match organizational data taxonomies and regulatory requirements.

- Malicious Content Detection: Blocks malicious URLs, toxic content, and harmful code generation. Database security controls prevent SQL injection and other query manipulation attacks targeting AI applications with data access.

- Custom Guardrails: Define organization-specific policies to enforce brand guidelines, restrict topics, and ensure AI outputs align with business requirements. Policies can be tailored by application, team, or use case.
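To illustrate the block, mask, or alert idea behind data loss prevention, the sketch below masks a few kinds of sensitive values with regular expressions. It is not the Prisma AIRS API or its detection logic; the patterns, labels, and `mask_sensitive` function are simplified assumptions for this example, and the `sk-` key format is purely illustrative.

```python
import re

# Hypothetical detectors; real DLP profiles are far broader and configurable.
PATTERNS = {
    "EMAIL": re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}"),
    "US_SSN": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
    "API_KEY": re.compile(r"\bsk-[A-Za-z0-9]{20,}\b"),
}


def mask_sensitive(text: str) -> tuple[str, list[str]]:
    """Replace each match with a [LABEL] placeholder and report which labels fired."""
    hits = []
    for label, pattern in PATTERNS.items():
        text, count = pattern.subn(f"[{label}]", text)
        if count:
            hits.append(label)
    return text, hits
```

The returned label list could drive the policy action: for example, mask an email address but block the whole prompt when a credential is detected.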

7.1.3 Unified AI Gateway (LiteLLM) - centralized AI management with built-in governance

Purpose: Provide a single, standardized interface to all LLM providers and MCP services while adding enterprise controls and optimization.

Key Features:

- Unified API: Single, OpenAI-compatible interface for all providers

- Enterprise Controls: Team-based access, rate limiting

- Cost Management: Real-time cost tracking, budget controls

- Observability: Detailed request logging, model usage logging

- MCP Support: Integrated Model Context Protocol gateway for tool/data access
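Because the gateway exposes an OpenAI-compatible interface, an application only needs the hub's base URL and a team-scoped key to reach any provider behind it. The sketch below builds, but does not send, such a request using only the standard library. The host name, key, and model alias in the usage note are placeholders, and the exact path should be confirmed against the deployed gateway.

```python
import json
import urllib.request


def build_chat_request(base_url: str, team_key: str, model: str, prompt: str) -> urllib.request.Request:
    """Build (but do not send) an OpenAI-style chat completion request."""
    body = {"model": model, "messages": [{"role": "user", "content": prompt}]}
    return urllib.request.Request(
        url=f"{base_url.rstrip('/')}/v1/chat/completions",
        data=json.dumps(body).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {team_key}",  # team-scoped key issued by the gateway
            "Content-Type": "application/json",
        },
        method="POST",
    )
```

For example, `build_chat_request("https://ai-hub.example.internal", "sk-team-demo", "chat-default", "Summarize Q3 capacity")` targets the hub rather than any individual provider, so switching the model behind the `chat-default` alias would require no application change.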

Prisma AIRS integrates natively with LiteLLM via API, enabling security inspection at the gateway layer without requiring changes to downstream AI applications or adding architectural complexity. This architecture ensures consistent policy enforcement across all models and providers while maintaining the performance characteristics required for production AI workloads. The integration operates transparently:

- Inbound inspection: Prompts flowing through LiteLLM are inspected by Prisma AIRS before reaching the model provider. Threats are blocked, sensitive data is masked or redacted, and policy violations are logged.

- Outbound inspection: Model responses are inspected before returning to the application. Harmful content, data leakage, and policy violations in generated outputs are detected and handled according to policy.

- Unified logging: Security events from Prisma AIRS are correlated with LiteLLM's operational telemetry, providing a complete audit trail of AI usage across cost, performance, and security dimensions.
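The inbound and outbound inspection steps above can be sketched as a wrapper around the model call. This is a conceptual illustration only: the inspector callables stand in for a security service and do not reflect the actual LiteLLM or Prisma AIRS interfaces, and every name here is hypothetical.

```python
from typing import Callable

Verdict = tuple[str, str]  # (action, text); action is "allow", "mask", or "block"


def guarded_completion(
    prompt: str,
    inspect_prompt: Callable[[str], Verdict],
    call_model: Callable[[str], str],
    inspect_response: Callable[[str], Verdict],
    audit_log: list[dict],
) -> str:
    """Run inbound inspection, the model call, then outbound inspection."""
    action, safe_prompt = inspect_prompt(prompt)
    audit_log.append({"stage": "inbound", "action": action})
    if action == "block":
        return "Request blocked by policy."
    reply = call_model(safe_prompt)  # only the inspected prompt leaves the hub
    action, safe_reply = inspect_response(reply)
    audit_log.append({"stage": "outbound", "action": action})
    if action == "block":
        return "Response withheld by policy."
    return safe_reply


def demo_inspect_prompt(text: str) -> Verdict:
    """Toy inbound check: block one well-known injection phrase."""
    if "ignore previous instructions" in text.lower():
        return ("block", "")
    return ("allow", text)


def demo_inspect_response(text: str) -> Verdict:
    """Toy outbound check: redact a marker that stands in for leaked data."""
    if "SECRET" in text:
        return ("mask", text.replace("SECRET", "[REDACTED]"))
    return ("allow", text)
```

Keeping both checks in the gateway path means a blocked prompt never reaches the provider, and every decision lands in the same audit log as the rest of the request telemetry.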

| Layer | LiteLLM | Prisma AIRS |
|---|---|---|
| Access Control | Team-based API keys, rate limiting | Policy enforcement by user, role, data classification |
| Cost Management | Real-time spend tracking, budget alerts | N/A |
| Routing | Model selection, failover, load balancing | N/A |
| Security | N/A | Threat detection, DLP, guardrails, agent monitoring |
| Compliance | Request/response logging | Security event logging, framework mapping (OWASP, NIST, MITRE) |
| Observability | Latency, token usage, error rates | Security metrics, policy violations, risk scoring |
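The Cost Management row assigns spend tracking and budget controls to the gateway. As a rough sketch of how a per-team budget check can work, the example below computes the cost of a call from token counts and refuses calls that would exceed a limit. The `TeamBudget` class and the per-1,000-token prices used with it are hypothetical placeholders, not any provider's rates or LiteLLM's implementation.

```python
from dataclasses import dataclass


@dataclass
class TeamBudget:
    """Hypothetical per-team spend tracker with a hard limit in USD."""
    limit_usd: float
    spent_usd: float = 0.0

    def try_charge(
        self,
        prompt_tokens: int,
        completion_tokens: int,
        usd_per_1k_prompt: float,
        usd_per_1k_completion: float,
    ) -> bool:
        """Record the cost of a call if it fits the budget; otherwise refuse it."""
        cost = (prompt_tokens / 1000) * usd_per_1k_prompt
        cost += (completion_tokens / 1000) * usd_per_1k_completion
        if self.spent_usd + cost > self.limit_usd:
            return False  # caller should reject or reroute the request
        self.spent_usd += cost
        return True
```

A gateway would typically estimate cost before the call for enforcement and reconcile it with actual token usage afterward; this sketch collapses both steps into one for brevity.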

Purpose: Enforce consistent security policies across all AI interactions before they reach external providers.

Key Features:

- Threat Detection: Identify and prevent AI-specific attacks (prompt injection, jailbreaking, data exfiltration attempts).

- Data Loss Prevention: Inspect prompts and responses to detect and block sensitive data (PII, credentials, trade secrets, proprietary code).

- Policy Enforcement: Apply policies based on data classification, team, and destination.

- Compliance: Log AI interactions for regulatory compliance and incident investigation.
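Policy enforcement by data classification, team, and destination can be pictured as a rule lookup that fails closed. The sketch below is one possible shape for such a decision function; the rule table, labels, and `decide` function are assumptions for illustration and do not represent the product's policy model.

```python
from typing import Optional

# Hypothetical rule table: (data classification, destination type) -> action.
RULES = {
    ("public", "external"): "allow",
    ("internal", "external"): "mask",
    ("restricted", "external"): "block",
    ("restricted", "private"): "allow",
}
DEFAULT_ACTION = "block"  # fail closed when no rule matches


def decide(
    classification: str,
    destination: str,
    team: str,
    trusted_teams: Optional[set[str]] = None,
) -> str:
    """Return 'allow', 'mask', or 'block' for one AI request."""
    action = RULES.get((classification, destination), DEFAULT_ACTION)
    # A trusted team may send internal data unmasked; nothing relaxes a block.
    if action == "mask" and team in (trusted_teams or set()):
        return "allow"
    return action
```

Failing closed on unknown combinations means a new data label or destination is blocked until someone writes an explicit rule for it.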

Why This Matters: Traditional security tools can’t see inside AI conversations. This layer provides AI-aware inspection that understands the context and intent of prompts and responses, not just the network traffic patterns.

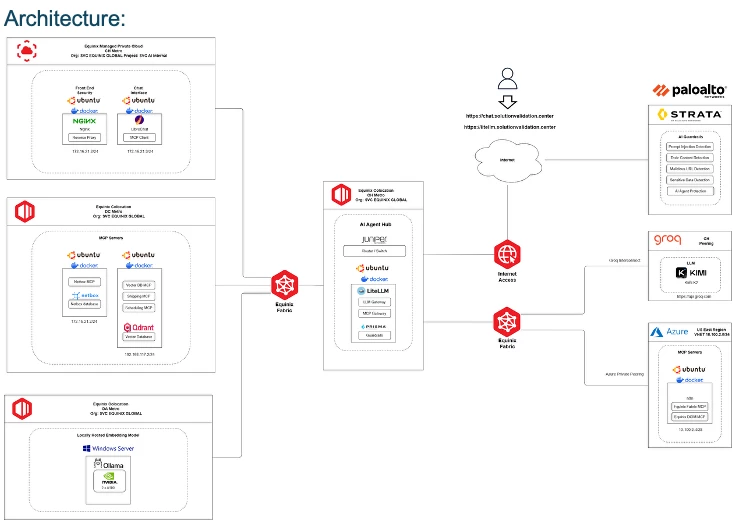

7.2 AI Application Use Cases on Distributed AI Hub

7.2.1 Use Case 1: SVC AI Assistant

An internal chatbot, powered by the AI platform, that helps sales and project management teams quickly identify available infrastructure, product resources, and technical expertise, then automatically reserves resources and coordinates schedules.

In this use case, the Distributed AI Hub deploys a LiteLLM gateway integrated with Palo Alto Networks Prisma AIRS, as discussed in Sections 7.1.2 and 7.1.3.

The data flow and working of the AI security via Prisma AIRS integration can be viewed in this demo.

7.2.2 Use Case 2: Pricing RAG Assistant for E-commerce

A future solution for the e-commerce enterprise segment is being developed in Equinix's Solution Validation Center (SVC). This agentic AI application is built on the Equinix Distributed AI Hub as a fully private, AI-driven RAG assistant that accesses proprietary enterprise data, combining internal pricing, margin, and inventory data with real-time competitor intelligence.

Secure access to this agentic AI application will be provisioned via the AI hub's LiteLLM gateway, while all vector search, LLM inference, and data orchestration stay within a secure Managed Private Cloud environment. The application delivers dynamic, competitive pricing recommendations without risking sensitive information. Powered by Equinix SVC, Groq LPUs, and the Dify agent platform, and secured by Palo Alto Networks Prisma AIRS, the solution provides sellers with fast, compliant, and reliable insights, enabling sharper market responsiveness and stronger competitive positioning.